The photo exists. It always existed. The archive just wouldn't give it up.

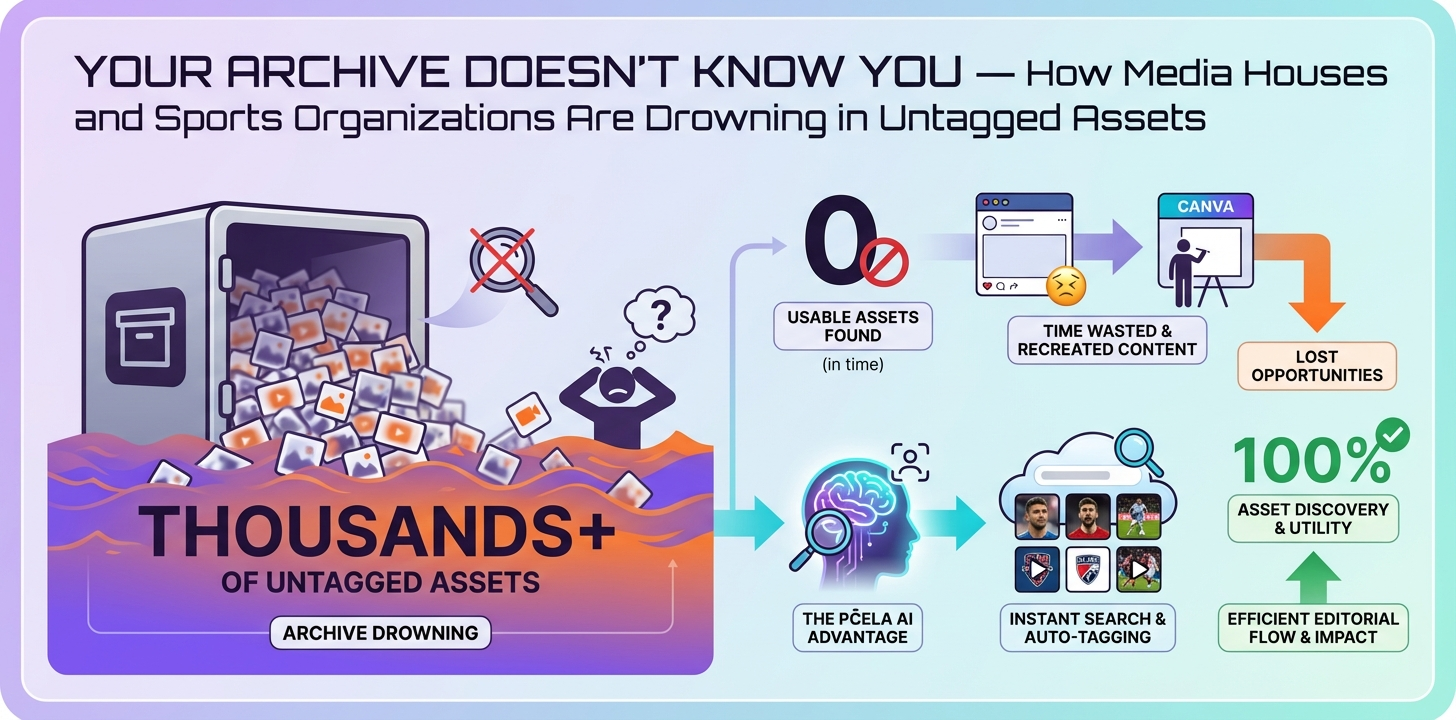

This is not a story about bad employees or poor discipline. It is a story about what happens when media asset management scales beyond what human-enforced taxonomy can hold together - and why the real cost never shows up on the storage invoice.

Section 1: The Discovery Tax - The Cost Nobody Is Measuring

Every organization tracks storage costs. Almost none track the compounding cost of content that exists but cannot be found.

Call it the Discovery Tax. It accumulates in the gap between "we produced this" and "we can use this" - paid out in editorial hours spent on failed searches, in licensing fees for assets the team already owns, in re-shoots that duplicate work done six months ago, and in publishing windows missed because the right frame was buried three folders deep under a naming convention someone invented in 2019 and nobody else ever fully understood.

Industry research into AI-powered media asset management consistently points to significant time savings on content and branding work - with AI-powered DAM systems saving teams an average of 11 to 18 hours per week on branding and content initiatives (MediaValet). Those figures point directly to where the time is actually going before those systems are in place.

And the market is paying attention. The media asset management sector is projected to reach $2.39 billion in 2026, driven largely by organizations that have finally named the problem they have been absorbing silently.

But the Discovery Tax has a second layer that is harder to quantify. It lives inside one person.

Most high-volume media archives have a long-tenured staff member who knows where everything is. They remember the folder the Copa final footage ended up in after the server migration. They know which photographer's drive holds the pre-season portraits from 2021. This person is not a luxury. They are, effectively, the archive's only functional search engine. When they leave - and eventually, they leave - the institutional memory leaves with them. What remains is a folder structure that made sense to one person, in a specific historical context, that no longer exists.

That is a single point of failure dressed up as institutional knowledge.

Section 2: Why Your Current Media Asset Management System Is Making It Worse

Here is the uncomfortable truth about folder-based archives and legacy digital asset management platforms: they work fine until they don't, and the point at which they stop working is invisible until it has already cost you something.

Folder hierarchies and manual naming conventions look like systems. At small scale, they behave like systems. But they depend entirely on consistent human behavior across every upload, every contributor, every deadline sprint, every staff transition. Deadline pressure is the first thing to erode that consistency. Multi-contributor ingestion is the second. Staff turnover does the rest.

Keyword-based search has the same structural ceiling. It can only surface what was manually tagged at the moment of upload. In high-volume environments - a broadcaster ingesting match footage daily, a sports organization processing photographer submissions after every fixture - manual tagging is the first step to be skipped when time is short. Which means the archive's searchability degrades in direct proportion to the team's output volume. The busier the editorial team, the less findable the archive becomes.

Platforms like Bynder and Canto have documented performance issues at large library sizes, where keyword search limitations become operationally significant. This is not a criticism specific to those products. It is a structural characteristic of any system that treats files as static objects with attached labels rather than as assets with queryable attributes.

The difference matters. A label-based system answers the question "what did someone type about this file?" A queryable system answers "find all photos of this player, in an away kit, from matches in the second half of last season." Those are not the same question, and only one of them is useful when you have a publishing window closing in ninety minutes.

One more objection worth addressing directly: "We have a system - it just isn't being used correctly."

A system that requires perfect human behavior to function is not a system. It is a policy. Policies do not scale.

Section 3: What "Searchable" Actually Means at Scale

Keyword matching and semantic indexing are not the same thing. The distinction is worth being precise about.

Keyword matching finds "goalkeeper save" because someone typed those words into a metadata field. Semantic indexing understands what is inside the file - the visual content, the scene context, the faces present, the words spoken - and generates that metadata automatically, without a human intermediary. One depends on what people remember to write. The other depends on what the system can read.

Auto-tagging transforms the ingestion moment structurally. Instead of requiring a human to describe an asset before it becomes discoverable, the system analyzes the asset on arrival. Objects, scenes, and faces are identified. Categories are assigned. The asset enters the archive already indexed, already searchable, already findable by people who had nothing to do with the upload.

Face recognition is where this gets specific. A generic face recognition model knows famous faces - the kind of recognition trained on publicly available datasets. But a sports organization does not primarily need to find photographs of globally famous athletes. It needs to find photographs of its own squad, its own coaching staff, its own academy players - people who may be known within the organization but not indexed in any public model.

This is what semantic indexing looks like in a production environment. Pčela uses a dual approach: a pre-existing recognition database combined with client-trained recognition built from the organization's own asset library. The system learns to recognize the specific people that matter to that organization - an under-23 squad member, a recently signed midfielder, a sports director who appears in every press conference but nowhere in a public dataset. That is not a generic capability bolted onto a storage layer. It is face recognition in media workflows designed around how sports and media organizations actually operate.

Speech-to-text transcription adds a third dimension to this. A post-match press conference video, indexed only by filename and upload date, is practically unsearchable. The same video, with its spoken content transcribed and integrated into the search index, becomes findable by anything said inside it - a player's name, a tactical phrase, a specific question from a journalist. The internal content of audio-visual assets becomes as findable as any text document.

Then there is faceted search. The structural answer to "I know it exists but I cannot describe it precisely enough." Filter simultaneously across people, places, topics, usage rights, and orientation. Narrow from thousands of assets to a precise result in seconds, without knowing the filename, the upload date, or the photographer's name.

Section 4: The Rights Problem - When Untagged Assets Become Legal Exposure

Discovery failure is expensive. Rights failure is a different category of problem.

An asset found and reused without rights verification is not just an operational mistake. For EU organizations, it is a potential GDPR violation, a licensing breach, or both. And the scenario where this happens is rarely negligence. More often, the rights metadata was never attached at upload, or it was attached and then separated from the file during a migration, or it lives in a spreadsheet that three people knew about and one of them left two years ago.

Image rights in sports media are particularly tangled. Player contracts, third-party photographer licensing, broadcast exclusivity windows, and sponsor restrictions can all affect whether a given asset is cleared for a given use. An archive that cannot answer "is this cleared for social media in Germany this week?" is not functioning as a media asset management system. It is functioning as a liability stored in a folder.

Rights-aware search is non-negotiable for any organization operating seriously in the EU regulatory environment. And rights metadata is only useful if it travels with the asset, surfaces in search results, and is queryable at the moment of use - not retrievable from a separate system after the fact.

The infrastructure question is not separate from the compliance question. For European organizations handling biometric data - which face recognition data legally is, under GDPR - routing that data through non-EU cloud providers creates a compliance exposure that has nothing to do with how the system performs. It is a jurisdictional problem. Pčela is hosted on Hetzner infrastructure, within the EU, with full GDPR compliance built into the deployment architecture. That is not a marketing position. It is a deployment decision with direct legal significance for any organization that takes its regulatory obligations seriously - and for organizations in EU sports media, the obligations are not theoretical.

Section 5: From Liability to Asset - What AI-First Indexing Looks Like in Practice

Picture the workflow without the Discovery Tax. An asset arrives in Cloud DAM. The system analyzes it on ingestion - faces recognized against both a global reference database and the organization's own trained model, objects and scenes tagged automatically via auto-tagging, any spoken content transcribed and indexed. By the time the upload confirmation fires, the asset is already searchable by person, by place, by topic, and by rights status. No human intervention required. No tagging queue accumulating overnight.

This is what Pčela was built to do.

Not a generic cloud storage layer with search functionality appended. Not a conventional digital asset management platform adapted for media use. Pčela is an AI-first Cloud DAM built for the volume and operational complexity of sports and media organizations - the kind of environment where a single match day generates hundreds of assets across multiple contributors, all of which need to be findable the following morning by every member of the editorial team.

The auto-tagging handles what manual workflows cannot sustain at scale. The faceted search architecture - queryable across people, places, topics, rights status, and orientation simultaneously - answers the "I know it exists" searches that flat keyword systems cannot resolve. And the LitteraWorks transcription integration treats audio-visual content as structured, queryable data rather than opaque files. A video interview, a post-match press conference, a podcast episode - all of it becomes searchable by spoken word, indexed at the same depth as any text document in the archive.

Industry data shows that 73% of sports organizations plan to use AI specifically for content creation and distribution in 2026 (SportsPro / Sportradar). The infrastructure question - where does that AI run, whose data does it process, under what regulatory framework - is the question that separates organizations that can actually deploy these capabilities from those that are still evaluating.

AppWorks works with 50+ organizations across four continents. The pattern teams encounter consistently: the cost of migrating a legacy archive feels significant until it is placed next to the Discovery Tax being paid every day the archive stays unsearchable. The question is never really whether migration is expensive. The question is whether the status quo costs more. And it almost always does - it just costs in hours and missed opportunities rather than in line items on an invoice.

The Archive Has Already Paid for Itself - You Just Can't Find the Receipt

Every asset your team produced last season represents budget already spent. The match photography, the behind-the-scenes footage, the press conference recordings, the training ground content - that investment is sitting in storage right now. Whether it returns anything depends entirely on whether your editorial team can find it when they need it.

The Discovery Tax does not announce itself. It accumulates quietly in editorial workarounds, in Canva files that exist because the archive failed, in licensing costs for content the organization already owns, in institutional knowledge that walked out the door with someone's last paycheck.

AI-powered media asset management is not a future consideration. For organizations producing content at any serious volume, it is the structural answer to a problem that manual taxonomy was never designed to solve. And with 82% of C-suite leaders expecting a higher level of organizational change in 2026 compared to the previous year (Accenture), the window for building the right infrastructure before the pressure intensifies is narrowing.

If your archive is growing faster than your team's ability to find things inside it, the Discovery Tax is already running. Pčela indexes, tags, and makes your entire media library searchable - by face, by spoken word, by rights status, by every attribute that matters at the moment of use. Book a demo and bring your hardest search problem. The conversation starts with listening to what your archive currently cannot do.